~ Synchronizing Multimodal Recordings Using Audio-To-Audio Alignment - In Journal on Multimodal User Interfaces

» By Joren on Thursday 06 August 2015

The article titled “Synchronizing Multimodal Recordings Using Audio-To-Audio Alignment” by Joren Six and Marc Leman has been accepted for publication in the Journal on Multimodal User Interfaces. The article will be published later this year. It describes and tests a method to synchronize data-streams. Below you can find the abstract, pointers to the software under discussion and an author version of the article itself.

Synchronizing Multimodal Recordings Using Audio-To-Audio Alignment

An Application of Acoustic Fingerprinting to Facilitate Music Interaction Research

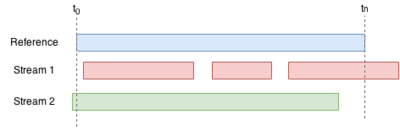

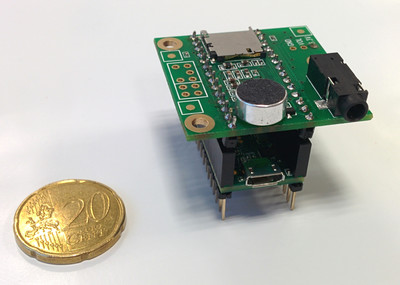

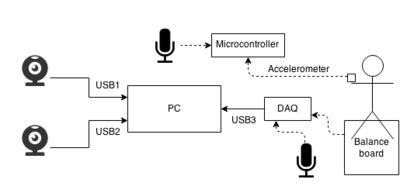

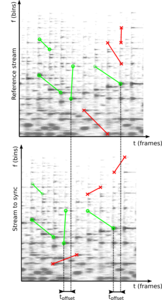

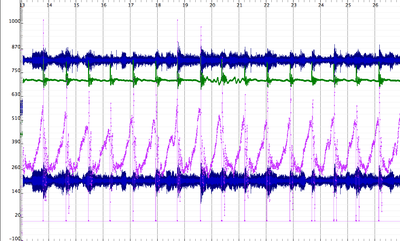

Abstract: Research on the interaction between movement and music often involves analysis of multi-track audio, video streams and sensor data. To facilitate such research a framework is presented here that allows synchronization of multimodal data. A low cost approach is proposed to synchronize streams by embedding ambient audio into each data-stream. This effectively reduces the synchronization problem to audio-to-audio alignment. As a part of the framework a robust, computationally efficient audio-to-audio alignment algorithm is presented for reliable synchronization of embedded audio streams of varying quality. The algorithm uses audio fingerprinting techniques to measure offsets. It also identifies drift and dropped samples, which makes it possible to find a synchronization solution under such circumstances as well. The framework is evaluated with synthetic signals and a case study, showing millisecond accurate synchronization.
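To give a rough idea of how fingerprint matches can be turned into an offset, here is a minimal sketch in Python. It is not the algorithm from the article or the Panako implementation. It assumes that fingerprints have already been extracted as `(hash, time)` pairs, and the function name `estimate_offset` and the `resolution` parameter are made up for this illustration. Every matching hash votes for a time difference, and the difference with the most votes is taken as the offset.

```python
from collections import Counter, defaultdict


def estimate_offset(reference, other, resolution=0.001):
    """Estimate how many seconds to add to `other` times to land on the
    `reference` timeline.

    Both arguments are lists of (hash, time_in_seconds) pairs. This input
    format is an assumption made for the sketch.
    """
    # Index the reference fingerprints by hash for fast lookup.
    times_by_hash = defaultdict(list)
    for fingerprint_hash, time in reference:
        times_by_hash[fingerprint_hash].append(time)

    # Each matching hash votes for one time difference, binned at `resolution`.
    votes = Counter()
    for fingerprint_hash, time in other:
        for reference_time in times_by_hash.get(fingerprint_hash, ()):
            votes[round((reference_time - time) / resolution)] += 1

    if not votes:
        return None  # no common fingerprints, so no offset can be estimated

    # The most common difference wins; spurious matches are outvoted.
    best_bin, _ = votes.most_common(1)[0]
    return best_bin * resolution
```

A single histogram peak like this only models a constant offset. Handling drift or dropped samples, as the article does, would require looking at how the offset changes over time, which this sketch leaves out.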

To read the article, consult the author version of Synchronizing Multimodal Recordings Using Audio-To-Audio Alignment. The data-set used in the case study is available here. It contains balance board data, accelerometer data, and recordings from two webcams, all of which need to be synchronized. The final publication is available at Springer via 10.1007/s12193-015-0196-1.

The algorithm under discussion is included in Panako, an audio fingerprinting system, but is also available for download here. The SyncSink application has been packaged separately for ease of use.

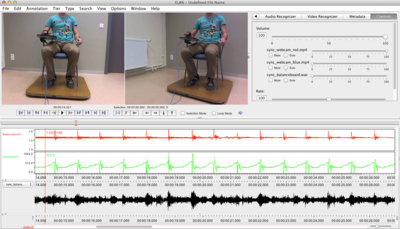

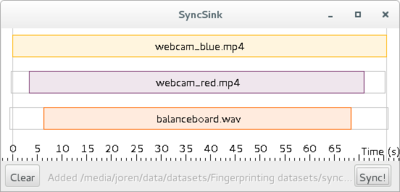

To use the application, start it by double-clicking the downloaded SyncSink JAR-file. Then add audio or video files using drag and drop. If the same audio is found in the media files, a time-box plot appears, as shown in the screenshot. To add corresponding data-files, click one of the boxes on the timeline and choose a data-file that is synchronized with that audio. The data-file should be a CSV-file with ‘,’ as the separator and a time-stamp in fractional seconds in the first column. After pressing Sync, a new CSV-file is created in which the first column contains the correctly shifted time-stamps. If this is done for multiple files, a synchronized sensor-stream is created. In addition, ffmpeg commands to synchronize the media files themselves are printed to the command line.
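The time-stamp shift applied to the CSV-files can be sketched in a few lines of Python. This is only an illustration of the idea, not SyncSink's own code. The function name `shift_csv_timestamps`, the six-decimal output format and the handling of header rows are assumptions, and the offset is expected to come from the audio alignment step.

```python
import csv


def shift_csv_timestamps(in_path, out_path, offset_seconds):
    """Copy a comma-separated file, adding `offset_seconds` to column one.

    The first column is expected to hold a time-stamp in fractional seconds.
    Rows whose first cell is not a number (for example a header) are copied
    unchanged; that behaviour is a choice made for this sketch.
    """
    with open(in_path, newline="") as src, open(out_path, "w", newline="") as dst:
        reader = csv.reader(src, delimiter=",")
        writer = csv.writer(dst, delimiter=",")
        for row in reader:
            if not row:
                continue  # skip empty lines
            try:
                timestamp = float(row[0])
            except ValueError:
                writer.writerow(row)  # non-numeric first cell: keep as-is
                continue
            shifted = timestamp + offset_seconds
            writer.writerow([f"{shifted:.6f}"] + row[1:])
```

For example, `shift_csv_timestamps("sensor.csv", "sensor_synced.csv", 0.25)` would write a copy of a hypothetical `sensor.csv` in which every time-stamp is moved 0.25 seconds later.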

This work was supported by a Methusalem grant from the Flemish Government, Belgium. Special thanks go to Ivan Schepers for building the balance boards used in the case study. If you want to cite the article, use the following BibTeX:

@article{six2015multimodal,

author = {Joren Six and Marc Leman},

title = {{Synchronizing Multimodal Recordings Using Audio-To-Audio Alignment}},

issn = {1783-7677},

volume = {9},

number = {3},

pages = {223-229},

doi = {10.1007/s12193-015-0196-1},

journal = {{Journal on Multimodal User Interfaces}},

publisher = {Springer Berlin Heidelberg},

year = 2015

}