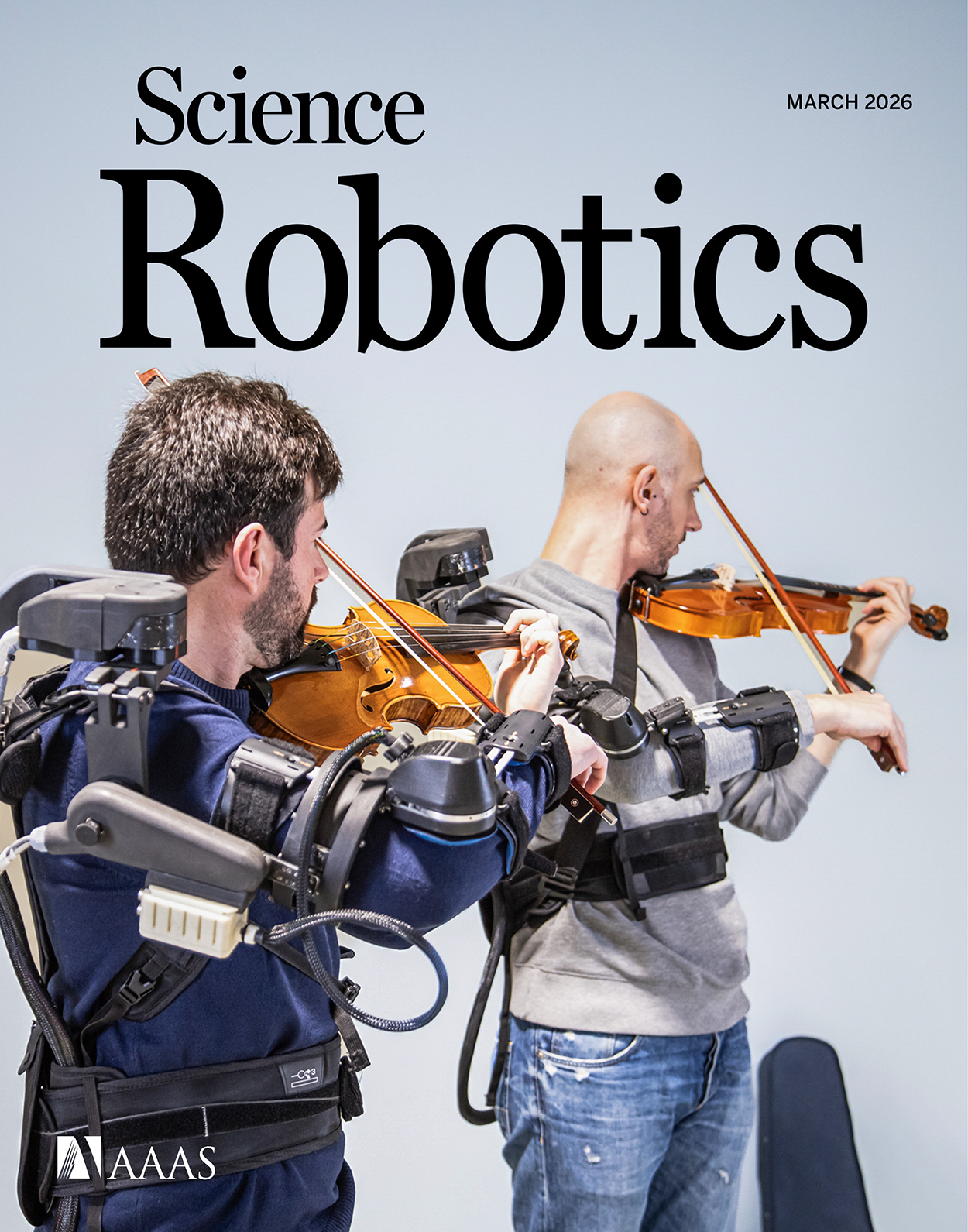

~ Robot-mediated haptic feedback outperforms vision in violin duo coordination

» By Joren on Thursday 19 March 2026

A couple of former colleagues have just published the article Robot-mediated haptic feedback outperforms vision in violin duo coordination in Science Robotics. The article is about providing guidance to violin players with an exoskeleton. Congratulations Alexandra, Mark and Canan!

It is the result of a European research project in which I was involved only at the start. I did, however, also contribute to the study in a small but crucial way. During the experiment, the exoskeletons suddenly stopped working. I was asked to have a look and see if there was something to be done. I was a bit hesitant to go near these unique, expensive prototypes with a soldering iron but, after a couple of tense minutes and fixing some easy-to-access connections, the arms came back to life. The experiment could continue and everybody started clapping and cheering. This is at least how I remember it. Perhaps that last part is not entirely accurate. Anyhow, I made it into the acknowledgments; thanks Ola.

Abstract: Joint actions among humans rely on the integration of multiple sensory modalities, most notably auditory and visual cues, which support explicit communication between partners. However, haptic feedback provides a direct, implicit channel for sensorimotor communication, and its contribution to fine motor coordination in joint actions remains largely unexplored. Here, we demonstrate that haptic communication, rendered through bidirectionally coupled wearable robots, outperforms traditional auditory-visual feedback in a complex and challenging real-life joint action: ensemble violin performance. First, we developed a pair of two–degree-of-freedom upper-limb exoskeletons capable of transparently following violinists’ natural movements and rendering viscoelastic torques proportional to the joint angular deviation between the partners. Then, we designed a within-subject experiment with 20 violin duos performing a musical piece under four sensory feedback conditions: auditory (A), auditory-visual (AV), auditory-haptic (AH), and auditory-visual-haptic (AVH), across two tempi (72 and 100 beats per minute). Despite the musicians being unfamiliar with the robot-mediated haptic feedback and unaware of the bidirectional connection between them, haptic feedback (AH and AVH) substantially enhanced spatiotemporal coordination and dynamic musical alignment compared with the extensively trained auditory-visual feedback (A and AV). The multisensory feedback condition AVH yielded the highest scores across all measures. Our findings demonstrate that haptic feedback can support fine motor coordination in violin duo performance more effectively than visual cues, particularly for professional musicians, because of its implicit and embodied nature, and that it can be effectively delivered via wearable robots, expanding the paradigms of human-human sensorimotor interactions.
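
To make the "viscoelastic torques proportional to the joint angular deviation between the partners" a bit more concrete, here is a minimal sketch of what a bidirectional spring-damper coupling between two joints could look like. This is my own illustration and not the controller from the article: the `JointState` class, the `coupling_torque` function, and the stiffness and damping values are all hypothetical, and the sketch assumes the damping term acts on the difference in angular velocity.

```python
from dataclasses import dataclass


@dataclass
class JointState:
    angle: float     # joint angle in radians
    velocity: float  # joint angular velocity in rad/s


def coupling_torque(own, partner, stiffness=2.0, damping=0.1):
    """Torque (N*m) pulling `own` toward `partner`.

    Hypothetical spring-damper model: the gains are made-up values,
    not parameters taken from the article.
    """
    elastic = stiffness * (partner.angle - own.angle)       # spring term
    viscous = damping * (partner.velocity - own.velocity)   # damper term
    return elastic + viscous


# Two players whose elbow angles have drifted apart a little.
a = JointState(angle=0.50, velocity=0.0)
b = JointState(angle=0.70, velocity=0.2)

torque_on_a = coupling_torque(a, b)  # positive: a is pulled toward b
torque_on_b = coupling_torque(b, a)  # negative: b is pulled toward a
print(torque_on_a, torque_on_b)
```

In this simplified model the two torques are equal and opposite, which is what makes the coupling bidirectional: each player feels a gentle pull toward the other, and the pull vanishes when their movements match.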